Introduction

Microsoft recently granted the world a sneak peek into one of the server blades powering their highly anticipated Project xCloud service during their Game Stack Live stream last month. Cramming 8 Xboxes into a 2U server is no mean feat, so we thought we’d see what we could glean from the short video.

Project xCloud server blade CAD model.

Xbox Units (MB1-8)

Project xCloud console from 2019 interview with Phil Spencer. Note the inset RJ45 connector and M.2 slot.

Something that jumps out immediately is that Microsoft have doubled the number of Xbox One S units in each blade from 4 to 8 (MB1-8) since they last let us catch a glimpse of the hardware in a 2018 teaser video. Of course, to do this they have had to switch to a larger 2U chassis.

We noticed something interesting while watching the video: the RJ45 port (used for the console’s network connection) is inset into the board. On consumer Xboxes, this port is flush against the edge to allow an Ethernet cable to be plugged in easily. After a bit more digging, we found a Fortune interview with Microsoft’s Phil Spencer from mid-2019 which shows the board. These Xboxes are completely custom: the boards have been purpose-built for Project xCloud. Of course, this was already known (Digital Foundry reported on it back in December 2019); it just doesn’t seem to have been widely reported.

Another interesting detail is the inclusion of what appears to be an unpopulated M.2 slot (typically used for SSDs) in the corner of each board. This is interesting for two reasons: 1) The Xbox One S only came with SATA. 2) The slot is unpopulated. Let’s take a look at each of these in turn.

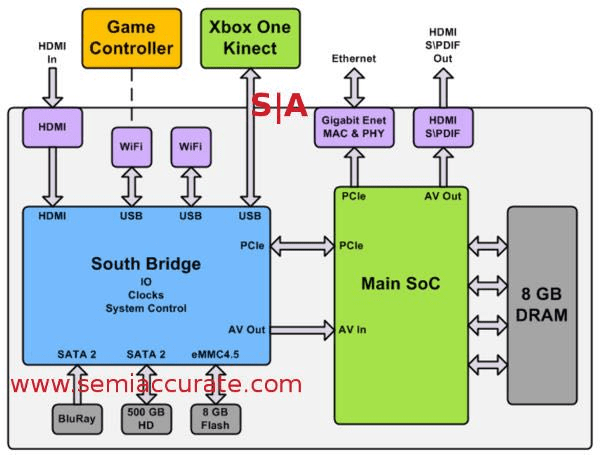

Xbox One system architecture diagram from Hot Chips 25. PCIe is used to connect the Xbox SoC to both the South Bridge and NIC, while SATA connects the BD drive and HDD.

Both the keying and the silkscreen outline lead us to believe that this connector is indeed M.2, not mSATA. While M.2 is capable of carrying PCIe, it seems more likely that they are just utilising the existing SATA bus, but using the M.2 form-factor to save space on the board (see Footnotes). The Xbox One S may have some PCIe lanes spare (or possibly they bifurcated the lanes connecting the southbridge); however, “spare” is not generally something we see when we are talking about console hardware.

Whether the slot is populated in production, we don’t know. It’s possible that the slot was used for bringup and that later firmware added support for netbooting, rendering dedicated storage redundant. However, without any dedicated storage, game assets would have to be streamed over the network, vying for bandwidth with the encoded video and controller input streams. We consider it much more likely that this particular server just didn’t have any SSDs installed when it was demoed.

Given the effort of designing a custom board, we find it strange that they didn’t opt for a 4U design, where each console is oriented vertically and plugged directly into the backplane, increasing capacity and making installation and servicing easier.

Networking / IO (FPB)

Project xCloud Front Panel Board for network IO.

The Front Panel Board (FPB) hosts all the network functionality, acting as a central nervous system of sorts. An array of RJ45 ports around the periphery funnels the ethernet connections from each of the Xbox units into the two QSFPs accessible from the front of the server — we presume that each Xbox gets its own dedicated lane on the QSFP and thus, its own dedicated port on whatever the server is cabled to.

Two heatsinks can be seen, which we assume are covering a pair of 4-port Ethernet PHYs that convert the 1000BASE-T from each Xbox to the QSGMII required for the QSFP modules. We find it unlikely that the FPB performs any switching, since the QSFPs are wide enough for each Xbox to have its own dedicated lane; link negotiation should ensure that both sides run at 1Gb/s.
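To make the one-lane-per-console presumption concrete, here is a minimal Python sketch. A QSFP module carries four lanes, so two modules give exactly eight. The ordering of MB1-8 across the two modules is purely our illustration; the video doesn’t show which console lands on which lane, and the names `LANES_PER_QSFP`, `lane_for` and `lane_map` are hypothetical.

```python
# Illustrative only: the assignment order below is an assumption, not
# something visible in the video.
LANES_PER_QSFP = 4                             # a QSFP module carries four lanes
XBOX_UNITS = [f"MB{i}" for i in range(1, 9)]   # MB1..MB8 as labelled on the blade


def lane_for(unit_index):
    """Return (qsfp_index, lane_index) for a zero-based console index."""
    return divmod(unit_index, LANES_PER_QSFP)


# Map each console label to its presumed (QSFP, lane) pair.
lane_map = {unit: lane_for(i) for i, unit in enumerate(XBOX_UNITS)}

for unit, (qsfp, lane) in lane_map.items():
    print(f"{unit} -> QSFP{qsfp} lane {lane}")
```

With eight consoles and eight lanes there is nothing left over, which is consistent with our guess that no switching is needed on the FPB.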

Moving further along the front panel, we can see that Microsoft have preserved the Windows logo and status indicator LEDs found on the original 1U blade. Finally, on the far right we see an RJ45 port for remote management (MGMT). It’s hard to trace the cable for this port, but we would guess that it terminates at the connector labelled PBM on the Power Management Board (PMB).

Management Controller

Project xCloud Management Controller — the “brain” of the xCloud server blade.

The management board is the most mysterious thing in here, and still has us scratching our heads. Confusingly, there are three subtly-different acronyms used on this board:

- PBM

- PBMC

- PMBC

P is power, B is board and C is either controller or connector. M is possibly management; however, MB could be mainboard, although that doesn’t explain the inconsistent spelling.

Our best guess is the following:

- PBM: Power Board Management. Ethernet connection from the power board to the MGMT port on the front panel.

- PBMC: Power Board Management Controller. Connection from the FPB to the microcontroller handling power delivery and monitoring (management controller).

- PMBC: we’re honestly not sure what this stands for. While it is labelled RJ45 PMBC, it actually terminates at both ends with a 3+ pin female connector. Our guess is that this powers some integrated magnetics in the RJ45 management port, but it’s a bit of a stretch.

The most noticeable connector here is the giant yellow-and-black input power connector on the bottom-right, which takes power from the PSU and distributes it to the PWR connectors around the periphery of the board feeding each of the Xboxes. The distinctive yellow power cables will be familiar to anyone who’s dug around the insides of a PC — they’re for delivering the Xbox One S’ 12V input.

In the centre of the board are two wide connectors which handle DATA for four consoles each. We’re uncertain quite what is travelling over these wires, but we presume it’s a low-speed management bus of some sort for controlling each console and monitoring voltages / temperature to ensure the system is healthy. We don’t believe that any video data is being sent over this bus — that looks to be squarely handled by the 1GbE link between each console and the FPB.

A FAN CONN cable carries power, sense and control signals to the eight 2U fans at the rear of the server. We would guess that the fans are temperature-controlled, and not just running at full tilt.

There is one cable whose purpose we can’t quite determine: ?MBUS, which looks like it connects to the PSU underneath. Our guess is that this is PMBus, and is used for monitoring / controlling the PSU.

Finally, there’s a low-power IC near the bottom of the board in the centre, which is the brains of the entire board: the Management Controller (MC). The markings are too difficult to make out, so it’s hard to say much more about this part. It seems to have an integrated ethernet MAC / PHY, and probably has some ADCs for voltage monitoring. The board also has a coin-cell battery which is most likely backing some volatile configuration memory to retain it in the event of power-loss.

Power / Thermals

Beyond the Xbox units, there’s very little else which contributes to the power budget in any meaningful way. A quick back-of-the-napkin calculation puts the power budget at around 800W (eight consoles at a pessimistic 100W each, based on the 79W worst-case figure measured by Digital Foundry). Digital Foundry previously reported that the custom xCloud boards were slightly more power efficient due to the “Hovis Method” used to tune the power profile of each individual console more finely. At the same time, the CPU runs with a slight overclock to compensate for some of the extra stream processing the console is performing.
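For anyone who wants to check our napkin, the arithmetic can be sketched in a few lines of Python. The 100W allowance and the 79W measurement are the figures quoted above; the variable names are ours, and the sketch ignores fans, the FPB and PSU losses, which we have not measured.

```python
XBOX_COUNT = 8                # consoles per 2U blade (MB1-8)
MEASURED_WORST_CASE_W = 79    # Digital Foundry's worst-case Xbox One S figure
ASSUMED_PER_XBOX_W = 100      # our pessimistic per-console allowance

# Blade budget using the pessimistic allowance.
budget_w = XBOX_COUNT * ASSUMED_PER_XBOX_W

# What the consoles would draw if each hit the measured worst case.
measured_total_w = XBOX_COUNT * MEASURED_WORST_CASE_W

# Margin left over for everything that is not a console.
headroom_w = budget_w - measured_total_w

print(f"budget: {budget_w}W, measured worst case: {measured_total_w}W, "
      f"headroom: {headroom_w}W")
```

The gap between the two totals is the slack our pessimistic figure leaves for the rest of the blade.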

The Xbox One X, on the other hand, is significantly more power-hungry than the One S. AnandTech managed to reach 172W, almost 100W higher than the worst-case figure Digital Foundry hit on the One S. We can only assume that this is the reason Microsoft are not currently using the One X for Project xCloud.
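As a rough illustration of why that matters at blade scale, the sketch below multiplies both worst-case figures by eight. A One X blade with eight consoles is purely hypothetical on our part; nothing in the video suggests such a configuration exists.

```python
XBOX_COUNT = 8         # assumption: same console count as the One S blade
ONE_S_PEAK_W = 79      # Digital Foundry worst case, Xbox One S
ONE_X_PEAK_W = 172     # AnandTech peak, Xbox One X

# Per-console difference between the two peak figures.
per_console_delta_w = ONE_X_PEAK_W - ONE_S_PEAK_W

# Console-only totals for each blade variant.
one_s_blade_w = XBOX_COUNT * ONE_S_PEAK_W
one_x_blade_w = XBOX_COUNT * ONE_X_PEAK_W

print(f"per-console delta: {per_console_delta_w}W")
print(f"One S blade: {one_s_blade_w}W, hypothetical One X blade: {one_x_blade_w}W")
print(f"ratio: {one_x_blade_w / one_s_blade_w:.2f}x")
```

On these numbers a One X blade would need more than twice the console power of the One S design, before any extra cooling is accounted for.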

Closing Thoughts

Nothing we have presented should come as a surprise, but it was interesting to dig into how everything is connected nonetheless. The takeaway points:

- Microsoft have custom-designed consoles specifically for use with Project xCloud.

- Use of M.2 should in theory allow for faster loading times.

- Greatly-increased power consumption makes it uncertain whether we’ll see an Xbox One X used for Project xCloud.

Footnotes

- The original version of this analysis overlooked the fact that M.2 carries both PCIe and SATA, reasoning about how Microsoft may have found some spare PCIe lanes to use.